UN Chief Calls For Ban Of AI Tech Posing Human Rights Risks

Some artificial intelligence systems affect people's right to privacy as well as their rights to health, education, freedom of movement, and more.

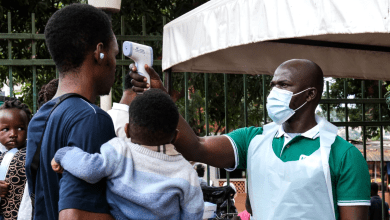

Michelle Bachelet, the United Nations High Commissioner for Human Rights, has called for a moratorium (a suspension or ban) on the sale and use of Artificial Intelligence (AI) technology that poses human rights risks, including the state use of facial recognition software, until adequate safeguards are put in place.

According to an ABC report, the UN Human Rights Chief made the plea on Wednesday, pointing to the rapid rate at which AI is developing despite widespread concerns about the privacy risks and racial biases that come with the emerging technology.

Bachelet’s warnings followed a report released by the UN Human Rights Office analysing how AI systems affect people’s right to privacy, as well as their rights to health, education, freedom of movement and more.

“Artificial intelligence can be a force for good, helping societies overcome some of the great challenges of our times. But AI technologies can have negative, even catastrophic effects if they are used without sufficient regard to how they affect people’s human rights.”

“Artificial intelligence now reaches into almost every corner of our physical and mental lives and even emotional states,” Bachelet added.

While the report did not cite specific software, it nonetheless called for a ban on AI applications “that cannot be operated in compliance with international human rights law as such AI systems are used to determine who gets public services, decide who has a chance to be recruited for a job, and of course they affect what information people see and can share online.”

The report warned of the dangers of implementing the technology without due diligence, citing cases of people being wrongly arrested because of flawed facial recognition tech or being denied social security benefits because of the mistakes made by these tools.

The report called for a moratorium on the use of remote biometric recognition technologies in public spaces, at least until authorities can demonstrate compliance with privacy and data protection standards and the absence of discriminatory or accuracy issues.

A 2019 study from the U.S. Department of Commerce’s National Institute of Standards and Technology found that a majority of facial recognition software on the market had higher rates of false positive matches for Asian and Black faces than for white faces. A separate 2019 study from the U.K. found that 81 per cent of suspects flagged by the facial recognition technology used by London’s Metropolitan Police force were innocent.

Against this backdrop, Bachelet’s report slammed the lack of transparency around the implementation of many AI systems and warned that their reliance on large data sets can result in people’s data being collected and analysed in opaque ways, as well as in faulty or discriminatory decisions.

“Given the rapid and continuous growth of AI, filling the immense accountability gap in how data is collected, stored, shared and used is one of the most urgent human rights questions we face,” she said.

“We cannot afford to continue playing catch-up regarding AI — allowing its use with limited or no boundaries or oversight, and dealing with the almost inevitable human rights consequences after the fact,” Bachelet said, calling for immediate action to put “human rights guardrails on the use of AI.”

Evan Greer, the director of the nonprofit advocacy group Fight for the Future, said the report is a welcome development, especially as it further underscores the “existential threat” posed by this emerging technology.

“This report echoes the growing consensus among technology and human rights experts around the world: artificial intelligence powered surveillance systems like facial recognition pose an existential threat to the future of human liberty. Like nuclear or biological weapons, technology like this has such an enormous potential for harm that it cannot be effectively regulated, it must be banned,” Greer said in the ABC News report.

“Facial recognition and other discriminatory uses of artificial intelligence can do immense harm whether they’re deployed by governments or private entities like corporations,” Greer added. “We agree with the UN report’s conclusion: there should be an immediate, worldwide moratorium on the sale of facial recognition surveillance technology and other harmful AI systems.”
